Random benchmark for the day

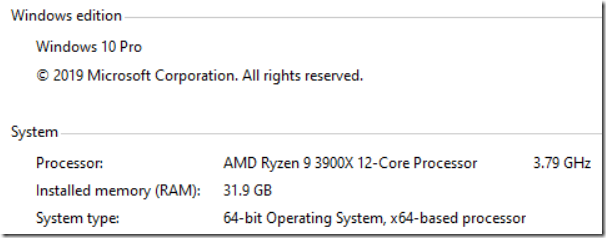

One of our developers recently got a new machine, and we were excited to see what kind of performance we could get out of it. It is an AMD Ryzen 9 with 12 cores @ 3.79 GHz and 32 GB of RAM. The disk used was a Samsung SSD 970 EVO Plus 500 GB.

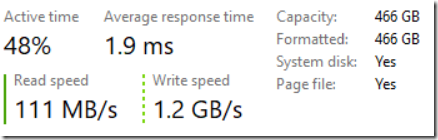

This isn’t an official benchmark, to be fair. This is us testing on how fast the machine is. As such, this is a plain vanilla Windows 10 machine, with no effort to perform any optimizations. Our typical benchmark involves loading all of stack overflow into RavenDB, so we’ll have enough data to work with. Here is what things looked like midway through:

As you can see, the write speed we are able to get is impressive.

We were able to insert all of Stack Overflow, a bit over 52 GB, in three and a half minutes, at a sustained rate of about 300 MB / sec.

Then we tested indexing.

- Map/Reduce on users by registration month (source ~6 million users) – under a minute.

- Full text search on users – two and a half minutes.

- Simple index on questions by tag (over 18 million questions & answers) – 11.5 minutes.

- Full text search on all questions and answers – 33 minutes.

Remember, these numbers are for indexing everything for the first time. It is worth noting that RavenDB dedicates a single thread per index, to avoid hammering the system with too much work. That means that these indexes were building concurrently with one another.
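To put those indexing times in perspective, here is a rough back-of-the-envelope conversion into documents per second. This is only a sketch: the document counts are the rounded figures quoted above ("~6 million" and "over 18 million"), and "under a minute" is treated as a full 60 seconds, so the results are approximate lower bounds rather than measured rates. The function name `docs_per_second` is just an illustrative helper.

```python
# Rough per-index throughput from the figures quoted in the post.
# Counts are rounded and "under a minute" is taken as 60 s, so these
# are approximate lower bounds, not measured rates.

def docs_per_second(doc_count: int, minutes: float) -> float:
    """Average number of documents indexed per second."""
    return doc_count / (minutes * 60)

# (document count, elapsed minutes) for each index in the list above.
indexing_runs = {
    "Map/Reduce on users by registration month": (6_000_000, 1.0),
    "Full text search on users": (6_000_000, 2.5),
    "Simple index on questions by tag": (18_000_000, 11.5),
    "Full text search on questions and answers": (18_000_000, 33.0),
}

rates = {
    name: docs_per_second(count, minutes)
    for name, (count, minutes) in indexing_runs.items()
}

for name, rate in rates.items():
    print(f"{name}: ~{rate:,.0f} docs/sec")
```

Keep in mind that these are per-index averages taken while the other indexes were also running, each on its own thread.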

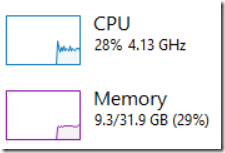

Here is the system utilization while this was going on:

Finally, we tested some other key scenarios (caching disabled in all of them):

- Reading documents (small working set, representing recent questions) – 243,371 req / sec at 512 MB / sec.

- Full random reads (data size exceeds memory, so disk hits) – 15,393.66 req / sec at 13.4 MB / sec.

These two are really interesting numbers. In the first one, we generate queries to specific documents over and over (with no caching). That means that RavenDB is able to answer them directly from memory. The idea is to simulate the common scenario of a working set that fits entirely in memory.

The second one is different. The data size on disk is 52 GB and we have 32 GB of RAM available to us. We generate random queries here, for different documents each time. We ensure that the queries cannot be served directly from memory and that RavenDB will have to hit the disk. As you can see, even under this scenario, we are doing fairly well. As an aside, it helps that the disk is good. We tried running this on an HDD once. The results were… not nice.

The final test we did was for writes, writing a small document to RavenDB. We got 118,000 writes/sec on a sustained basis, with about 32 MB / sec in data throughput. Note that we can do more by playing with the system configuration, but we are already at a high enough rate that it probably wouldn’t matter.
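Dividing each throughput figure by its request rate gives a rough idea of how much data each operation moved. The sketch below does that arithmetic for the three scenarios above. It assumes 1 MB = 1,000,000 bytes (the numbers above don't say whether MB is decimal or binary), and the helper name `bytes_per_op` is made up for illustration.

```python
# Implied average bytes per operation, derived from the quoted
# throughput and request rates. Assumes decimal megabytes
# (1 MB = 1,000,000 bytes), which is an assumption, not a stated fact.

BYTES_PER_MB = 1_000_000

def bytes_per_op(mb_per_sec: float, ops_per_sec: float) -> float:
    """Average number of bytes transferred per operation."""
    return mb_per_sec * BYTES_PER_MB / ops_per_sec

# (MB / sec, operations / sec) for each scenario.
scenarios = {
    "Reads, small working set": (512.0, 243_371.0),
    "Full random reads": (13.4, 15_393.66),
    "Small document writes": (32.0, 118_000.0),
}

sizes = {
    name: bytes_per_op(mb, ops)
    for name, (mb, ops) in scenarios.items()
}

for name, size in sizes.items():
    print(f"{name}: ~{size:,.0f} bytes per operation")
```

Under that assumption, the in-memory reads work out to roughly 2.1 KB per request, the random reads to a bit under 900 bytes, and the writes to roughly 270 bytes, which fits the description of a small document.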

All in all, that is a pretty nice machine.
