Sharing data in a Byzantine system

RavenDB is typically deployed as a set of trusted servers. The network is considered to be hostile, which is why we encrypt everything over the wire and use X509 certificates for mutual authentication, but once the connection is established, we trust the other side to follow the same rules as we do.

To clarify, I’m talking here about trust between nodes, not about a client connected to RavenDB. Clients are also authenticated using X509 certificates, but they are limited to the access permissions assigned to them. Nodes in a cluster fully trust one another and need to do things like forward commands accepted by one node to another one. That requires that the second node trust that the first node properly authenticated the client and won’t pass along operations that the client has no authority for.


Use Case 1: A Database System for Multiple Medical Clinics

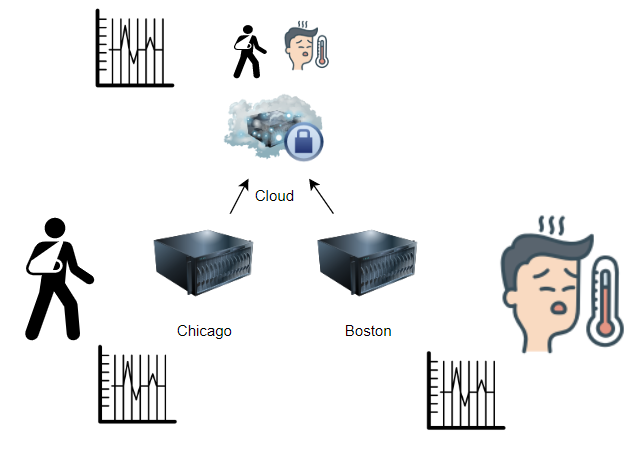

I think that a real use case might make things more concrete. Let’s assume that we have a set of clinics, with the following distribution of data.

We have two clinics, one in Boston and one in Chicago, as well as a cloud system. The rules of the system are as follows:

- Data from each clinic is replicated to the cloud.

- Data from the cloud is replicated to the clinics.

- Data from a clinic may only be at the clinic or in the cloud.

- A clinic cannot get (or modify) data that didn’t come from the clinic.
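
The rules above can be read as a single predicate over where a document originated and where it is headed. Here is a minimal sketch of that reading in Python. It is not RavenDB’s API: the `Document` type, the `origin` tag, the location names and `may_replicate` are all hypothetical names introduced for illustration, and the sketch assumes the strict interpretation in which the cloud sends each clinic only that clinic’s own data.

```python
from dataclasses import dataclass

CLOUD = "cloud"  # hypothetical location name


@dataclass(frozen=True)
class Document:
    id: str
    origin: str  # hypothetical tag: the clinic the data came from


def may_replicate(doc: Document, source: str, destination: str) -> bool:
    """Decide whether `doc` may flow from `source` to `destination`."""
    if source == destination:
        return False
    if destination == CLOUD:
        # Clinic -> cloud: a clinic only pushes data it originated.
        return source == doc.origin
    if source == CLOUD:
        # Cloud -> clinic: a clinic only receives its own data back.
        return destination == doc.origin
    # Clinic -> clinic: never allowed.
    return False


record = Document(id="patients/1", origin="boston")
print(may_replicate(record, "boston", CLOUD))   # True
print(may_replicate(record, CLOUD, "chicago"))  # False
```

Keeping the decision in one function makes it easy to see that no path exists from one clinic to another, even by way of the cloud.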

In this model, we have three distinct locations, and we presumably trust all of them (otherwise, would we put patient data on them?). There is a need to ensure that we don’t expose patient data from one clinic to another, but that is about it. Note that in terms of RavenDB topology, we don’t have a single cluster here. That wouldn’t make sense. To start with, we need to be able to operate the clinic when there is no internet connectivity. And we don’t want to pay any avoidable latency even if everything is working fine. So in this case, we have three separate clusters, one in each location, and they are connected to one another using RavenDB’s multi-master replication.

Use Case 2: A Database System Sharing with Outside Edge Points

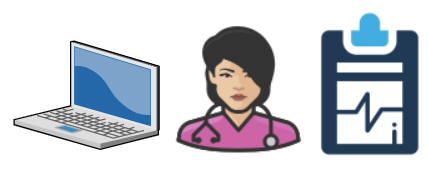

Let’s look at another model, however. In this case, we are still dealing with medical data, but instead of a clinic, we have to deal with a doctor making house calls:

In this case, we are still talking about private data, but we are no longer trusting the end device. The doctor may lose the laptop, they may have malware running on the machine or may be trying to do Bad Things directly. We want to be able to push data to the doctor’s machine and receive updates from the field.

RavenDB has some measures at the moment to handle this scenario. You need to get only some of the data from the cloud to the doctor’s laptop, and you want to push only certain things back to the cloud. You can use pull replication and ETL to handle this scenario, and it will work, as long as you are willing to trust the end machine. Given the stringent requirements for medical data, that is not out of bounds, actually. Full volume encryption, forbidding the use of unknown software and a few other protections ensure that if the laptop is lost, the only thing you can do with it is repurpose the hardware. If we can go with that assumption, this is great.

However… we need to consider the case that our doctor is actually malicious.

When the Edge Point isn’t as Healthy as the Doctor Using It

So we need something in the middle, between all our data and what can reside on that doctor’s machine. As it currently stands, in order to create the appropriate barrier between the doctor’s machine and the cloud, you’ll have to write your own sync code and apply any logic / authorization at that level.
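
To make the idea of such a barrier concrete, here is a rough sketch of what the authorization part of hand-written sync code might look like. Everything in it is an assumption made for illustration: the `DeviceGrant` record, the prefix-based rules and the `outgoing` / `incoming` functions are hypothetical and are not part of RavenDB.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceGrant:
    """Hypothetical record of what one doctor's laptop may touch."""
    device_id: str
    readable_prefixes: tuple[str, ...]  # document id prefixes the device may receive
    writable_prefixes: tuple[str, ...]  # document id prefixes the device may send back


def outgoing(grant: DeviceGrant, docs: dict[str, dict]) -> dict[str, dict]:
    """Select the documents the cloud is willing to push to the device."""
    return {doc_id: body for doc_id, body in docs.items()
            if doc_id.startswith(grant.readable_prefixes)}


def incoming(grant: DeviceGrant, writes: dict[str, dict]) -> tuple[dict, dict]:
    """Split writes coming from the device into accepted and rejected ones."""
    accepted, rejected = {}, {}
    for doc_id, body in writes.items():
        # Never trust the device: check every write against the grant.
        target = accepted if doc_id.startswith(grant.writable_prefixes) else rejected
        target[doc_id] = body
    return accepted, rejected


# Prefixes end with "/" so that "patients/17/" does not also match "patients/170/".
grant = DeviceGrant("laptop-1", ("patients/17/",), ("patients/17/visits/",))
accepted, rejected = incoming(grant, {
    "patients/17/visits/1": {"notes": "house call"},
    "patients/18/visits/1": {"notes": "not this doctor's patient"},
})
print(sorted(accepted))  # ['patients/17/visits/1']
print(sorted(rejected))  # ['patients/18/visits/1']
```

The filtering itself is the easy part. The sketch says nothing about retries, partial failures or conflicting edits, which is where most of the effort in real sync code goes.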

Sync code is non-trivial, mostly because of the number of edge cases you have to deal with and the potential for conflicts. This has already been solved by RavenDB, so having to write it again is not ideal as far as we are concerned.
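
As one example of the kind of edge case involved, consider detecting that the same document was changed independently in two places. A common generic technique is to compare version vectors. The sketch below shows that technique only; it is not RavenDB’s implementation, and the `compare` function and the node names are made up for illustration.

```python
def compare(a: dict[str, int], b: dict[str, int]) -> str:
    """Compare two version vectors (node name -> update counter)."""
    nodes = a.keys() | b.keys()
    a_ahead = any(a.get(n, 0) > b.get(n, 0) for n in nodes)
    b_ahead = any(b.get(n, 0) > a.get(n, 0) for n in nodes)
    if a_ahead and b_ahead:
        # Each side has seen updates the other has not: concurrent edits.
        return "conflict"
    if a_ahead:
        return "a_newer"
    if b_ahead:
        return "b_newer"
    return "equal"


cloud = {"cloud": 3, "laptop": 1}
laptop = {"cloud": 2, "laptop": 2}
print(compare(cloud, laptop))  # conflict
```

Detecting the conflict is only the first step. Hand-written sync code would still have to decide how to resolve it and how to propagate the resolution.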

What would you do?
