Production postmortem: Efficiency all the way to Out of Memory error

RavenDB is written in C#, and as such, uses managed memory. As a database, however, we need granular control of our memory, so we also do manual memory management.

One of the key optimizations that we utilize to reduce the amount of overhead we have on managing our memory is using an arena allocator. That is a piece of memory that we allocate in one shot from the operating system and operate on. Once a particular task is done, we can discard that whole segment in one shot, rather than try to work out exactly what is going on there. That gives us a proper scope for operations, which means that missing a free in some cases isn’t the end of the world.

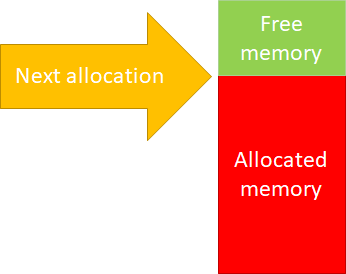

It also makes the code for RavenDB memory allocation super simple. Here is what this looks like:

Whenever we need to allocate more memory, we’ll just bump the allocator up. Initially, we didn’t even implement freeing memory, but it turns out that there are a lot of long running processes inside of RavenDB, so we needed to reuse the memory inside the same operation, not just between operations.

The implementation of freeing memory is pretty simple, as well. If we return the last item that we allocated, we can just drop the next allocation position by how many bytes were allocated. For that matter, it also allows us to do incremental allocations. We can ask for some memory, then increase the allocation amount on the fly very easily.

Here is a (highly simplified) example of how this works:

As you can see, there isn’t much there. A key requirement here is that you need to return the memory back in the reverse order of how you allocated it. That is usually how it goes, but what if it doesn’t happen?

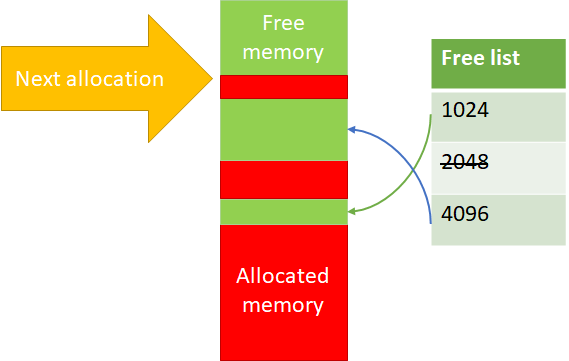

Well, then we can’t reuse the memory directly. Instead, we’ll place them in a free list. The actual allocations are done on powers of two, so that makes things easier. Here is what this actually looks like:

So if we free, but not from the top, we remember the location and can use it again. Note that for 2048 in the image above, we don’t have any free items.

I’m quite fond of this approach, since this is simple, easy to understand and has a great performance profile. But I wouldn’t be writing this blog post if we didn’t run into issues, now would I?

A customer reported high memory usage (to the point of memory exhaustion) when doing a certain set of operations. That… didn’t make any sense, to be honest. That was a well traveled code path, any issue there should have been long found out.

They were able to send us a reproduction and the support team was able to figure out what is going on. The problem was that the code in question did a couple of things, which altogether led to an interesting issue.

- It allocated and deallocated memory, but not always in the same order – this is fine, that is why we have the free list, after all.

- It extended the memory allocation it used on the fly – perfectly fine and an important optimization for us.

Give it a moment to consider how could these two operations together result in a problem…

Here is the sequence of events:

- Loop:

- Allocate(1024) -> $1

- Allocate(256) -> $2

- Grow($1, 4096) -> Success

- Allocate(128) -> $3

- Free($1) (4096)

- Free($3) (128)

- Free($2) (256)

What is going on here?

Well, the issue is that we are allocating a 1KB buffer, but return a 4KB buffer. That means that we add the returned buffer to the 4KB free list, but we cannot pull from that free list on allocation.

Once found, it was an easy thing to do (detect this state and handle it), but until we figured it out, it was quite a mystery.

Woah, already finished? 🤯

If you found the article interesting, don’t miss a chance to try our database solution – totally for free!