re: Writing a very fast cache service with millions of entries

I ran into this article that talks about building a cache service in Go to handle millions of entries. Go ahead and read the article; there is also an associated project on GitHub.

I don’t get it. Rather, I don’t get the need here.

The authors seem to want a way to store a lot of data (for a given value of lots) that is accessible over REST. They need to be able to run 5,000 – 10,000 requests per second over this, and also be able to expire things.

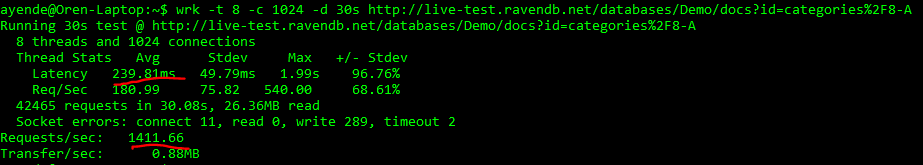

I decided to take a look at what it would take to run this in RavenDB. It is pretty late here, so I was lazy. I ran the following command against our live-test instance:

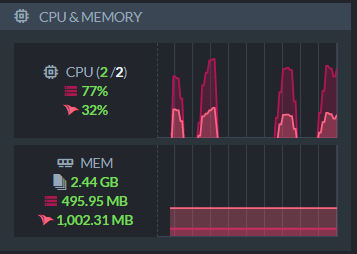

This says to create 1,024 connections and get the same document. On the right you can see the live-test machine stats while this was running. It peaked at about 80% CPU. I should note that the live-test instance is pretty much the cheapest one that we could get away with, and it is far away from me.

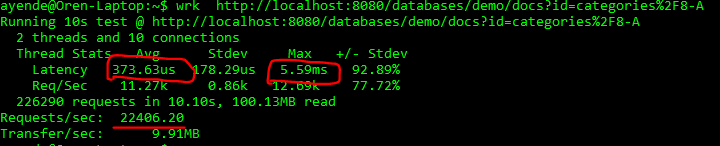

Ping time from my laptop to the live-test instance is around 230 – 250 ms, right around the numbers that wrk is reporting. I’m using 1,024 connections here to compensate for the distance. What happens when I run this locally, without the huge distance?

So I can do more than 22,000 requests per second (on a 2016-era laptop, mind you) with a max latency of 5.5 ms (the original article set its latency target for the average). Granted, I’m simplifying things here, because I’m checking a single document and not including writes. But 5,000 – 10,000 requests per second are small numbers for RavenDB, very easily achievable.

RavenDB even has the `@expires` feature, which allows you to specify a time at which a document will automatically be removed.

The nice thing about using RavenDB for this sort of feature is that millions of objects and gigabytes of data are not of particular concern for us. Raise that by an order of magnitude, and that is our standard benchmark. You’ll need to raise it by a few more orders of magnitude before we start taking things seriously.