Struct memory layout and memory optimizations

Consider a warehouse that needs to keep track of items. For the purpose of discussion, we have quite a few fields that we need to keep track of. Here is what this looks like in code:
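The listing itself is not reproduced here, so what follows is a reconstruction rather than the original code. The field names and types are taken from the layout dumps later in this post. Which fields are nullable, and the order they are declared in, are assumptions.

```csharp
using System;

// Reconstructed sketch: names and types come from the layout dumps,
// nullability and declaration order are assumptions.
public struct Dimensions
{
    public float Length;
    public float Width;
    public float Height;
}

public struct WarehouseItem
{
    public Dimensions? ProductDimensions;
    public long? ExternalSku;
    public TimeSpan? ShelfLife;
    public float? AlcoholContent;
    public DateTime? ProductionDate;
    public int? RgbColor;
    public bool? IsHazardous;
    public float? Weight;
    public int? Quantity;
    public DateTime? ArrivalDate;
    public bool? Fragile;
    public DateTime? LastStockCheckDate;
}
```

Every field here carries its own "has value" flag, which is what the rest of the post sets out to trim.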

And the actual Warehouse class looks like this:
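The original class is not shown here either. A minimal sketch of such a wrapper could look like this. The member names `Add`, `Get` and `Count` are illustrative assumptions, and the item struct is trimmed to two fields so the example compiles on its own.

```csharp
using System.Collections.Generic;

// Trimmed stand-in for the full item struct, so this sketch is self-contained.
public struct WarehouseItem
{
    public long? ExternalSku;
    public int? Quantity;
}

// Hypothetical wrapper around a list of items; member names are assumptions.
public class Warehouse
{
    // List<T> of a struct stores the items inline in one backing array.
    private readonly List<WarehouseItem> _items = new List<WarehouseItem>();

    public int Count => _items.Count;

    // Stores the item and returns its index.
    public int Add(WarehouseItem item)
    {
        _items.Add(item);
        return _items.Count - 1;
    }

    // Returns a copy of the item stored at the given index.
    public WarehouseItem Get(int index) => _items[index];
}
```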

The idea is that this is simply a wrapper around the list of items. We use a struct so the items are stored inline in the list's backing array, which gives us good memory locality.

The question is, what is the cost of this? A single struct instance consumes a total of 144 bytes. Let’s say that we have a million items in the warehouse. That would be over 137MB of memory.

That is… a big struct, I have to admit. Using ObjectLayoutInspector I was able to get the details on what exactly is going on:

Type layout for 'WarehouseItem' Size: 144 bytes. Paddings: 62 bytes (%43 of empty space)

As you can see, there is a huge amount of wasted space here, most of it because of the nullability. Each nullable field carries an additional byte, and the resulting padding and layout issues really explode the size of the struct.
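To see the per-field cost in isolation, here is a small sketch that measures value types with and without the nullable wrapper. It uses `Unsafe.SizeOf<T>()` from `System.Runtime.CompilerServices`; the `NullableSizes` helper is a name made up for this example.

```csharp
using System.Runtime.CompilerServices;

// Hypothetical helper, only here to compare T against Nullable<T>.
public static class NullableSizes
{
    // Size in bytes of a value of type T as the runtime lays it out.
    public static int Of<T>() => Unsafe.SizeOf<T>();
}
```

On the runtimes I am aware of, `NullableSizes.Of<long>()` is 8 while `NullableSizes.Of<long?>()` is 16: the one-byte flag is padded out to the alignment of the value next to it. A `bool?` takes 2 bytes and an `int?` takes 8.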

Here is an alternative layout, which conveys the same information much more compactly. The idea is that instead of having a full byte for each nullable field (with the impact on padding, etc.), we’ll have a single bitmap for all nullable fields. Here is what this looks like:
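The bitmap idea can be sketched on two fields as follows. This is not the original listing: the bit assignments and the `Has` and `Mark` helpers are assumptions made for illustration, and the real struct would repeat the pattern for every nullable field.

```csharp
public struct WarehouseItem
{
    // One bit per nullable field; a set bit means "this field has a value".
    // The bit positions are arbitrary choices for this sketch.
    private const ushort ExternalSkuBit = 1 << 0;
    private const ushort QuantityBit = 1 << 1;

    private long _externalSku;
    private int _quantity;
    private ushort _nullability;

    public long? ExternalSku
    {
        get => Has(ExternalSkuBit) ? _externalSku : (long?)null;
        set
        {
            _externalSku = value.GetValueOrDefault();
            Mark(ExternalSkuBit, value.HasValue);
        }
    }

    public int? Quantity
    {
        get => Has(QuantityBit) ? _quantity : (int?)null;
        set
        {
            _quantity = value.GetValueOrDefault();
            Mark(QuantityBit, value.HasValue);
        }
    }

    private bool Has(ushort bit) => (_nullability & bit) != 0;

    // Sets or clears the presence bit for one field.
    private void Mark(ushort bit, bool hasValue)
    {
        _nullability = hasValue
            ? (ushort)(_nullability | bit)
            : (ushort)(_nullability & ~bit);
    }
}
```

Callers still see `long?` and `int?` properties, so the public shape of the struct does not have to change.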

If we look deeper into this, we’ll see that this saved a lot: the struct is now 96 bytes. It’s a massive space saving, but…

Type layout for ‘WarehouseItem’

Size: 96 bytes. Paddings: 24 bytes (%25 of empty space)

We still have a lot of wasted space. This is because we haven’t organized the struct to eliminate padding. Let’s reorganize the struct’s fields to see what we can achieve. The only change I made was to rearrange the fields, and we have:
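Going by the layout dump that follows, the rearranged fields would be declared in roughly this order. This is a sketch that assumes the struct keeps sequential field order; the bitmap field is made public only to keep the example short, where real code would hide it behind properties.

```csharp
using System;

public struct Dimensions
{
    public float Length;
    public float Width;
    public float Height;
}

// Field order reconstructed from the layout dump; accessors omitted.
public struct WarehouseItem
{
    public Dimensions ProductDimensions;  // 12 bytes
    public float AlcoholContent;          // 4 bytes, fills the gap up to offset 16
    public long ExternalSku;              // the 8-byte fields sit together
    public TimeSpan ShelfLife;
    public DateTime ProductionDate;
    public DateTime ArrivalDate;
    public DateTime LastStockCheckDate;
    public float Weight;                  // then the 4-byte fields
    public int Quantity;
    public int RgbColor;
    public bool Fragile;                  // then the 1-byte fields
    public bool IsHazardous;
    public ushort Nullability;            // presence bitmap, 2 bytes
}
```

The pattern is to place each field where its alignment requirement is already satisfied, so the compiler never has to insert filler bytes.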

And the struct layout is now:

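Type layout for ‘WarehouseItem’

Size: 72 bytes. Paddings: 0 bytes (%0 of empty space)

Offsets of nested fields are relative to the containing struct.

| Offset | Type | Field | Size |
|---|---|---|---|
| 0-11 | Dimensions | ProductDimensions | 12 bytes |
| 0-3 | Single | ProductDimensions.Length | 4 bytes |
| 4-7 | Single | ProductDimensions.Width | 4 bytes |
| 8-11 | Single | ProductDimensions.Height | 4 bytes |
| 12-15 | Single | AlcoholContent | 4 bytes |
| 16-23 | Int64 | ExternalSku | 8 bytes |
| 24-31 | TimeSpan | ShelfLife | 8 bytes |
| 32-39 | DateTime | ProductionDate | 8 bytes |
| 40-47 | DateTime | ArrivalDate | 8 bytes |
| 48-55 | DateTime | LastStockCheckDate | 8 bytes |
| 56-59 | Single | Weight | 4 bytes |
| 60-63 | Int32 | Quantity | 4 bytes |
| 64-67 | Int32 | RgbColor | 4 bytes |
| 68 | Boolean | Fragile | 1 byte |
| 69 | Boolean | IsHazardous | 1 byte |
| 70-71 | UInt16 | nullability | 2 bytes |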

We have no wasted space, and we are at 50% of the original size (72 bytes instead of 144).

We can actually do better. Note that Fragile and IsHazardous are Booleans, and we have some free bits on _nullability that we can repurpose.

For that matter, RgbColor only needs 24 bits, not 32. Do we need alcohol content to be a float, or can we use a byte? If that is the case, can we shove both of them together into the same 4 bytes?
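Both ideas can be sketched in one small struct. The bit positions are assumptions (12 presence bits in use, bits 12 and 13 free), as is storing the alcohol content as a whole number in a byte. The name `PackedFields` is made up for this example.

```csharp
public struct PackedFields
{
    // Assumed free bits in the presence bitmap.
    private const ushort FragileBit = 1 << 12;
    private const ushort IsHazardousBit = 1 << 13;
    private const int RgbMask = 0xFFFFFF;

    private ushort _nullability;
    // Bits 0-23 hold the RGB color, bits 24-31 hold the alcohol content.
    private int _rgbColorAndAlcoholContentBacking;

    public bool Fragile
    {
        get => (_nullability & FragileBit) != 0;
        set => _nullability = value
            ? (ushort)(_nullability | FragileBit)
            : (ushort)(_nullability & ~FragileBit);
    }

    public bool IsHazardous
    {
        get => (_nullability & IsHazardousBit) != 0;
        set => _nullability = value
            ? (ushort)(_nullability | IsHazardousBit)
            : (ushort)(_nullability & ~IsHazardousBit);
    }

    public int RgbColor
    {
        get => _rgbColorAndAlcoholContentBacking & RgbMask;
        // Keep the top byte, replace the low 24 bits.
        set => _rgbColorAndAlcoholContentBacking =
            (_rgbColorAndAlcoholContentBacking & ~RgbMask) | (value & RgbMask);
    }

    public byte AlcoholContent
    {
        get => unchecked((byte)((uint)_rgbColorAndAlcoholContentBacking >> 24));
        // Keep the low 24 bits, replace the top byte.
        set => _rgbColorAndAlcoholContentBacking =
            (_rgbColorAndAlcoholContentBacking & RgbMask) | (value << 24);
    }
}
```

The two Booleans now cost nothing extra, and the color and alcohol content share four bytes instead of eight.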

For dates, can we use DateOnly instead of DateTime? What about ShelfLife: can we measure that in hours and use a ushort for it (giving us a maximum of about 7 years)?
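Here is a sketch of what those conversions might look like. The `CompactEncoding` class and its method names are made up for this example. A 16-bit unsigned value tops out at 65,535 hours, which is about 2,730 days or roughly 7.5 years, so the conversion is checked and throws rather than silently wrapping around.

```csharp
using System;

// Hypothetical helpers for the narrower representations.
public static class CompactEncoding
{
    // Rounds to whole hours; throws OverflowException when the value
    // is negative or above 65,535 hours.
    public static ushort ShelfLifeToHours(TimeSpan shelfLife)
        => checked((ushort)Math.Round(shelfLife.TotalHours));

    public static TimeSpan HoursToShelfLife(ushort hours)
        => TimeSpan.FromHours((double)hours);

    // Drops the time-of-day part, keeping only the calendar date.
    public static DateOnly ToDateOnly(DateTime value)
        => DateOnly.FromDateTime(value);
}
```

Both changes trade precision for space, so they only make sense if sub-hour shelf lives and times of day are not needed.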

After all of that, we end up with the following structure:
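Based on the layout dump that follows, the fields of the final struct would be declared roughly like this. It is a sketch: the property accessors that unpack the bitmap and the shared backing field are left out, and the backing fields are public only to keep the example short.

```csharp
using System;

public struct Dimensions
{
    public float Length;
    public float Width;
    public float Height;
}

// Field order reconstructed from the layout dump; accessors omitted.
public struct WarehouseItem
{
    public Dimensions ProductDimensions;
    public float Weight;
    public long ExternalSku;
    public DateOnly ProductionDate;
    public DateOnly ArrivalDate;
    public DateOnly LastStockCheckDate;
    public int Quantity;
    // RGB color and alcohol content share these four bytes.
    public int RgbColorAndAlcoholContentBacking;
    // Presence bits, plus the Fragile and IsHazardous flags.
    public ushort Nullability;
    public ushort ShelfLifeInHours;
}
```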

And with the following layout:

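Type layout for ‘WarehouseItem’

Size: 48 bytes. Paddings: 0 bytes (%0 of empty space)

Offsets of nested fields are relative to the containing struct.

| Offset | Type | Field | Size |
|---|---|---|---|
| 0-11 | Dimensions | ProductDimensions | 12 bytes |
| 0-3 | Single | ProductDimensions.Length | 4 bytes |
| 4-7 | Single | ProductDimensions.Width | 4 bytes |
| 8-11 | Single | ProductDimensions.Height | 4 bytes |
| 12-15 | Single | Weight | 4 bytes |
| 16-23 | Int64 | ExternalSku | 8 bytes |
| 24-27 | DateOnly | ProductionDate | 4 bytes |
| 0-3 | Int32 | ProductionDate.dayNumber | 4 bytes |
| 28-31 | DateOnly | ArrivalDate | 4 bytes |
| 0-3 | Int32 | ArrivalDate.dayNumber | 4 bytes |
| 32-35 | DateOnly | LastStockCheckDate | 4 bytes |
| 0-3 | Int32 | LastStockCheckDate.dayNumber | 4 bytes |
| 36-39 | Int32 | Quantity | 4 bytes |
| 40-43 | Int32 | rgbColorAndAlcoholContentBacking | 4 bytes |
| 44-45 | UInt16 | nullability | 2 bytes |
| 46-47 | UInt16 | ShelfLifeInHours | 2 bytes |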

In other words, we are now packing everything into 48 bytes, which is one-third of the initial cost, while still representing the same data. Our previous Warehouse class used to take 137MB for a million items; it would now take only about 45.8MB.
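The arithmetic behind those figures is simple enough to sketch. The helper below is hypothetical and counts only the raw item storage, ignoring the list's spare capacity and object headers.

```csharp
// Hypothetical helper: raw storage for `count` structs of `structSize` bytes.
public static class MemoryEstimate
{
    public static double Megabytes(int structSize, long count)
        => structSize * count / (1024.0 * 1024.0);
}
```

For a million items, `MemoryEstimate.Megabytes(144, 1_000_000)` comes to about 137.3, the 96 and 72 byte layouts come to about 91.6 and 68.7, and the final 48 byte layout to about 45.8.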

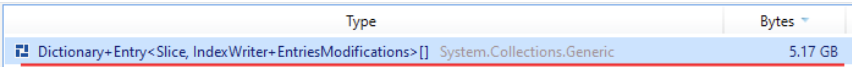

In RavenDB’s case, we had the following:

That is the backing store of the dictionary, and as you can see, it isn’t a nice one. Using similar techniques we are able to massively reduce the amount of storage that is required to process indexing.

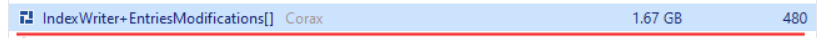

Here is what this same scenario looks like now:

But we aren’t done yet; there is still more that we can do.
