OLAP ETL Task


The OLAP ETL task is an ETL process that converts RavenDB data to the Apache Parquet file format, and sends it to one or more of these destinations:

- Amazon S3

- Amazon Glacier

- Microsoft Azure

- Google Cloud Platform

- File Transfer Protocol

- Local storage


This page explains how to create an OLAP ETL task using the Studio. To learn more about OLAP ETL tasks, and how to create one using the client API, see Ongoing Tasks: OLAP ETL.


In this page:

Create an OLAP ETL Task

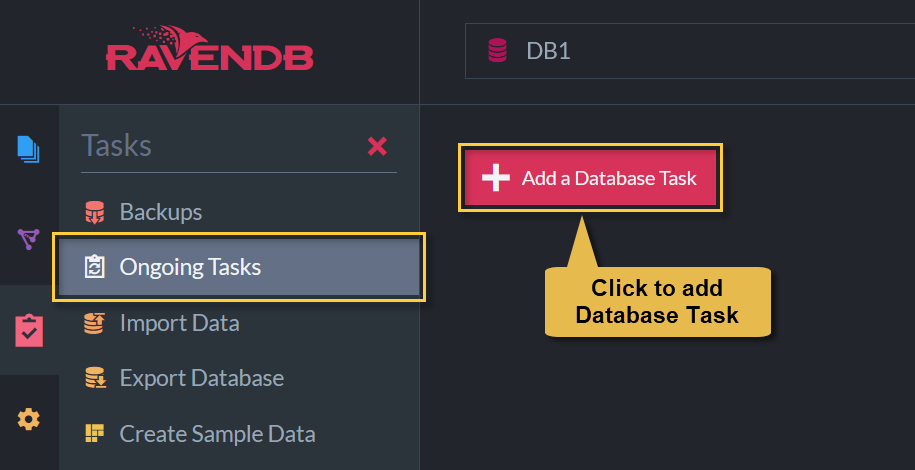

To begin creating your OLAP ETL task:

Ongoing tasks view

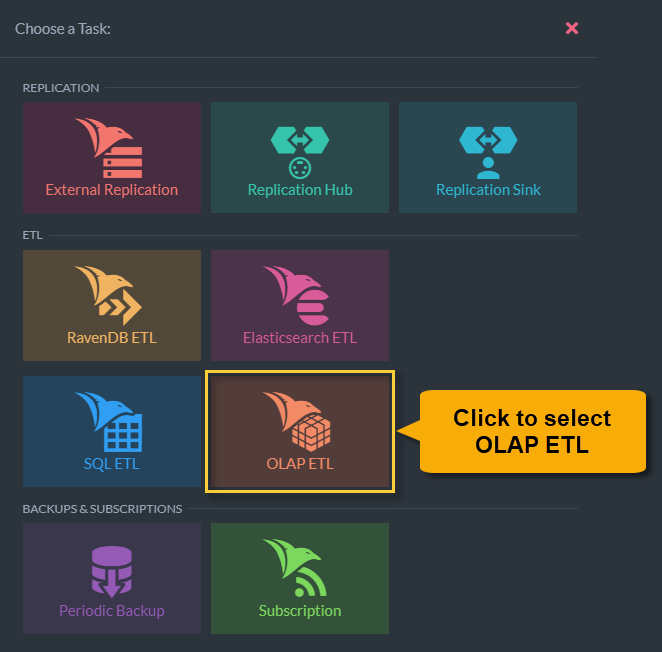

Task selection view

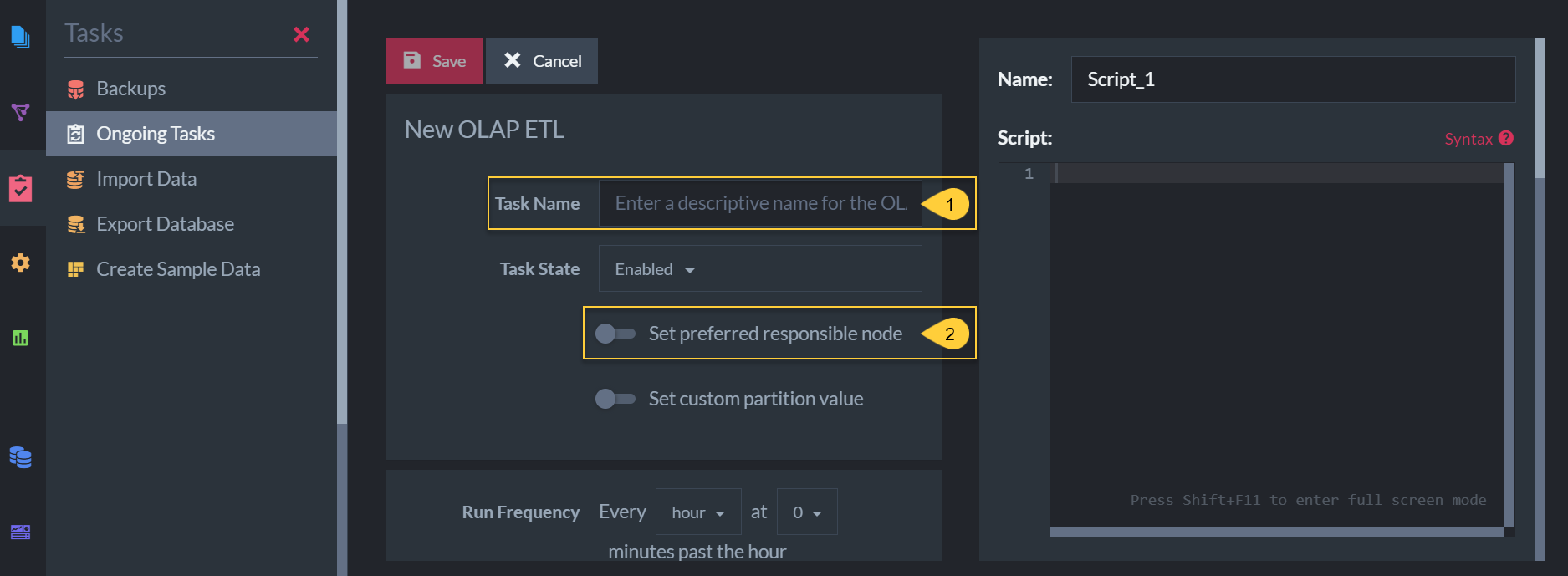

Define an OLAP ETL Task

New OLAP ETL task view

- The name of this ETL task (optional).

- Choose which of the cluster nodes will run this task (optional).

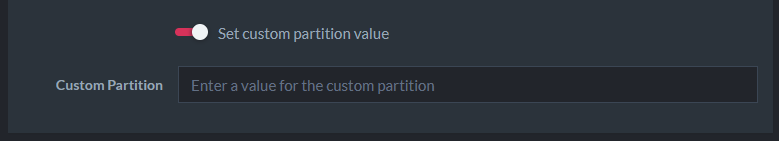

Custom Partition Value

Custom partition value

- A custom partition can be defined to differentiate parquet file locations when using the same connection string in multiple OLAP ETL tasks.

- The custom partition name is defined inside the transformation scripts.

- The custom partition value is defined in the input box above.

- The custom partition value is referenced in the transform script as $customPartitionValue.

- A parquet file path with a custom partition will have the following format:

{RemoteFolderName}/{CollectionName}/{customPartitionName=$customPartitionValue}

- Learn more in Ongoing Tasks: OLAP ETL.
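As a rough illustration of the path format above, the following Python sketch assembles the folder path for two tasks that share a connection string but use different custom partition values. The function name and the sample values (reports, Orders, source, task-a, task-b) are hypothetical and not part of RavenDB.

```python
def parquet_folder(remote_folder: str, collection: str,
                   partition_name: str, partition_value: str) -> str:
    """Assemble a folder path in the format described above (illustrative only)."""
    return f"{remote_folder}/{collection}/{partition_name}={partition_value}"


# Two hypothetical tasks sharing one connection string (same remote folder),
# distinguished only by their custom partition value.
path_a = parquet_folder("reports", "Orders", "source", "task-a")
path_b = parquet_folder("reports", "Orders", "source", "task-b")

print(path_a)
print(path_b)
```

Because only the last path segment differs, the output of each task lands in its own folder under the same collection.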

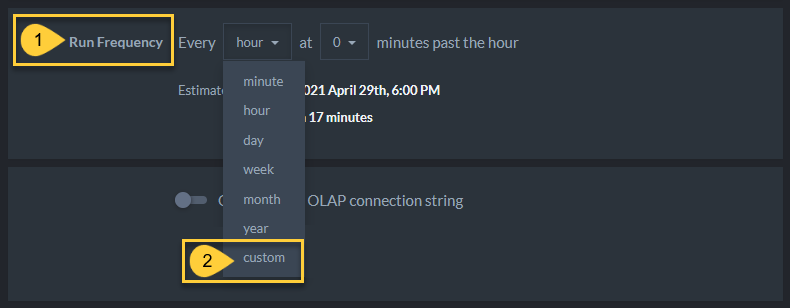

Run Frequency

Task run frequency

- Run Frequency

Select the exact timing and frequency at which this task should run from the dropdown menu.

- The maximum frequency is once every minute.

- Custom

Select 'Custom' from the dropdown menu to schedule the task using your own cron expression.
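For orientation, here are a few cron expressions of the kind that could be entered in the custom schedule field. This sketch assumes the common five-field cron layout (minute, hour, day of month, month, day of week), which is consistent with a one-minute maximum frequency; the Studio's own validation remains the authority on the exact syntax it accepts. The helper below is hypothetical and only checks the field count.

```python
# Sample schedules, assuming the standard five-field cron layout:
# minute  hour  day-of-month  month  day-of-week
EXAMPLES = {
    "* * * * *": "every minute",
    "0 * * * *": "at the start of every hour",
    "30 2 * * *": "every day at 02:30",
    "0 0 * * 0": "every Sunday at midnight",
}


def has_five_fields(expression: str) -> bool:
    """Return True if the expression has exactly five whitespace-separated fields."""
    return len(expression.split()) == 5


for expression, meaning in EXAMPLES.items():
    print(f"{expression!r:14} {meaning} (five fields: {has_five_fields(expression)})")
```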

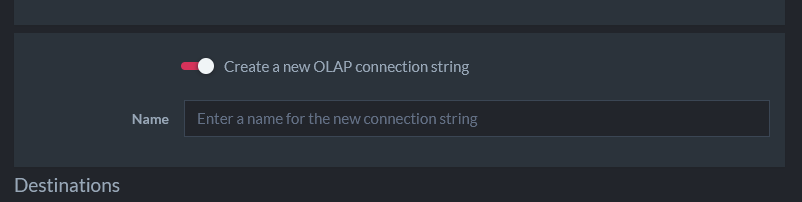

OLAP Connection String

OLAP connection string

- Select an existing connection string from the available dropdown, or create a new one.

- Create a new OLAP connection string

Toggle on to create and define a new OLAP connection string for this ETL task.

- If you choose to create a new connection string, you can enter its name and destination here.

- Multiple destinations can be defined.

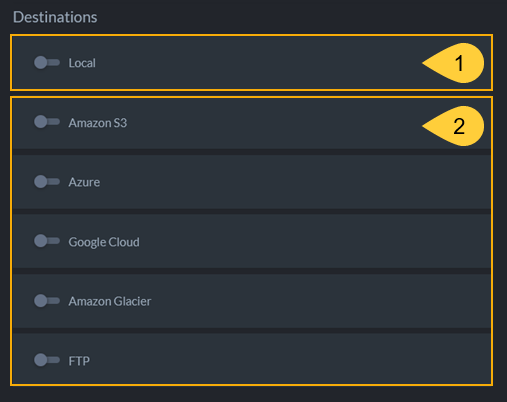

OLAP ETL Destinations

Select one or more destinations from this list. Clicking each toggle reveals further fields and configuration options for each destination.

OLAP ETL destinations

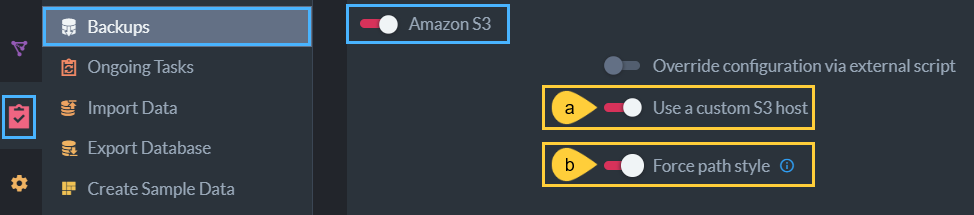

If you use an Amazon S3 custom host:

- a. Use a custom S3 host

Toggle to provide a custom server URL.

- b. Force path style

Toggle to change the default S3 bucket path convention on your custom Amazon S3 host.
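The two S3 addressing conventions that the Force path style toggle relates to can be sketched as follows. This is a general illustration of S3 URL styles rather than RavenDB's implementation, and the host, bucket, and key values are hypothetical.

```python
def virtual_hosted_url(host: str, bucket: str, key: str) -> str:
    """Virtual-hosted style: the bucket name is part of the host name."""
    return f"https://{bucket}.{host}/{key}"


def path_style_url(host: str, bucket: str, key: str) -> str:
    """Path style: the bucket name is the first segment of the path."""
    return f"https://{host}/{bucket}/{key}"


# Hypothetical custom host, bucket, and object key.
host, bucket, key = "s3.example.internal", "olap-exports", "Orders/part-0.parquet"
print(virtual_hosted_url(host, bucket, key))
print(path_style_url(host, bucket, key))
```

Custom S3-compatible hosts often do not provide a separate DNS name per bucket, which is a typical reason to prefer path style.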

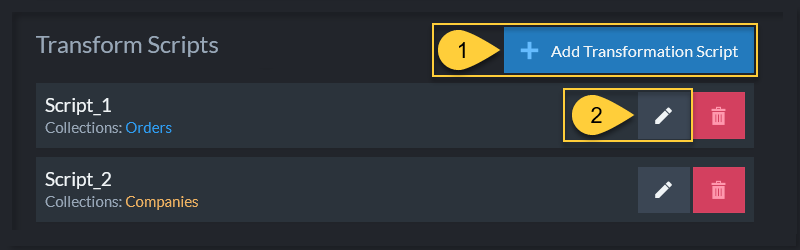

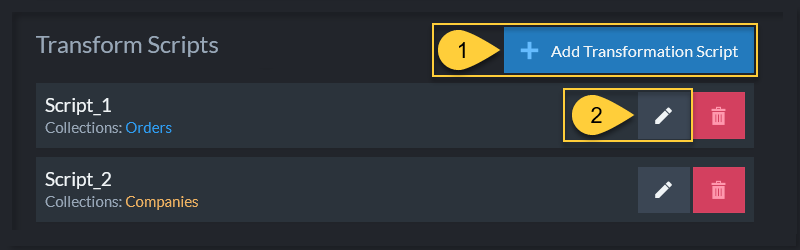

Transform Scripts

You can edit, delete or add to the list of existing transform scripts.

List of transform scripts

- Add a new transform script.

- Edit an existing transform script.

Create and edit JavaScript transform scripts on the right side of the OLAP ETL Studio view.

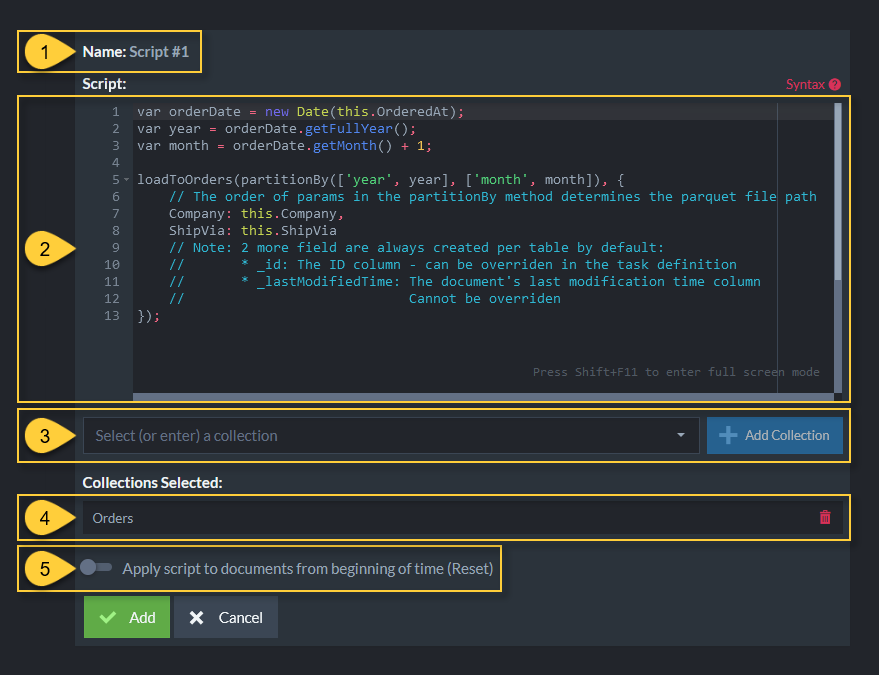

Define Transform Script

- Name

The script name is generated once the 'Add' button is clicked. The name of a script is always in the format: "Script #[order of script creation]".

- The transform script

Learn more about transformation scripts.

- Select a collection (or enter a new collection name) on which this script will operate.

- Collections Selected

The selected collection names on which the script operates.

- Apply script to documents from beginning of time (Reset)

- If selected, this script will operate on all existing documents in the specified collections the first time the task runs.

- When not selected, this script operates only on new documents.

Every parquet table that is created by a transform script includes two columns that aren't specified in the script:

- _id

Contains the source document ID. The default name used for this column is _id. You can override this name in the task definition; see more below.

- _lastModifiedTime

The value of the last-modified field in a document's metadata. Represented in Unix time.
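When reading the parquet output, the _lastModifiedTime value can be converted back to a readable timestamp. A minimal Python sketch, assuming the value is expressed in seconds since the Unix epoch (if your files hold milliseconds, divide by 1000 first); the sample value is made up.

```python
from datetime import datetime, timezone


def to_utc(last_modified_time: int) -> datetime:
    """Convert a Unix timestamp in seconds to a timezone-aware UTC datetime."""
    return datetime.fromtimestamp(last_modified_time, tz=timezone.utc)


sample = 1_700_000_000  # hypothetical _lastModifiedTime value (assumed seconds)
print(to_utc(sample).isoformat())  # 2023-11-14T22:13:20+00:00
```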

Override ID Column

Override ID column

These settings allow you to specify a different column name for the document ID column in a parquet file. The default ID column name is _id.

- Add a new setting.

- Select the name of the parquet table for which you want to override the ID column.

- Select the name for the table's ID column.

- Click to add this setting.

- Click to edit this setting.
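Conceptually, the override settings act as a per-table lookup with _id as the fallback. A small Python sketch of that idea, using hypothetical table and column names; it only illustrates the behavior described above and is not RavenDB's internal code.

```python
DEFAULT_ID_COLUMN = "_id"

# Hypothetical override settings: parquet table name -> ID column name.
overrides = {"Orders": "order_id"}


def id_column_for(table: str) -> str:
    """Return the overridden ID column name, or the default when none is set."""
    return overrides.get(table, DEFAULT_ID_COLUMN)


print(id_column_for("Orders"))    # order_id
print(id_column_for("Products"))  # _id
```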